MooVita MooBus-7

(All images: MooVita, except where specified otherwise)

Rising sun

Rory Jackson unpacks how this Singapore-based enterprise is efficiently developing and deploying its safe, self-driving public transport bus across Asia

While much of the buzz throughout the self-driving vehicle world has historically focused on robotaxis, sized for autonomously transporting one to four people along sporadic routes, our investigations have revealed that other autonomous vehicle (AV) embodiments and markets can serve as far better proving grounds for scalable, useful road autonomy.

Buses, for instance, operate fixed routes on a regular basis, and their customers expect consistent quality service regardless of impedances from traffic, pedestrians, roadworks or other passengers taking too long to board. A high-functioning autonomous system must weather not just all of these factors across towns and cities, but also additional layers of problems such as GNSS multipathing, sensor hardware faults, misreads by machine vision systems and the risk of latency drops at intersections or other high-pressure, close-quarter situations.

Singaporean mobility company MooVita has run the gamut of AV types, yet wound up choosing autonomous buses as the focus of its business for reasons like those above. The company was founded by engineers and scientists from Singapore’s Agency for Science, Technology and Research (A*STAR), a government-funded institute tasked with commercialising R&D deemed critical for realising the Southeast Asian country’s strategic ambitions.

As Dr Ken Chan, vice-president for business development at MooVita recounts to us, “Our group, then at A*STAR, had been working on mobile robots and autonomous driving since 2008, and among other projects, we’d been a key contributor to Singapore’s Smart Nation initiative on intelligent mobility frameworks, striking significant industry partnerships with players like Continental and Baidu along the way.”

Afterwards, in 2016, the team spun out MooVita as a company in its own right to continue R&D and commercialisation of its autonomous mobility competency, thereafter rewriting its self-driving software stack from top to bottom into a product that could be integrated onboard any vehicle with sufficient sensing and computing hardware for intelligent navigation.

“As our product was vehicle-agnostic, we spent our early growth years deploying and licensing the solution to various platforms, from low-speed shuttles to buggies, passenger cars and even large agricultural vehicles,” Dr Chan says.

“While it worked well on all of them, that strategy really divested us of any efficient use of our resources – to put it simply, it lacked focus. But as a fortuitous coincidence, the COVID-19 pandemic came very swiftly afterwards, which allowed us to observe and validate a hypothesis we’d already been considering internally: that public transport is so critical and vulnerable in functioning societies that it will undoubtedly be the first market for widespread AV adoptions.

“If ever a pandemic or other emergency should cause a lack of front-end workers for passenger and material mobility, people and resources must still be able to move around and autonomy is how you keep them moving. So, we decided in 2020 that shared and public transportation was the ideal beachhead market for us to focus on, before expanding into other areas.”

Success in that market would depend on having a physical bus that MooVita could put forward, both as a product and as a technical demonstrator to interested parties. However, the company was determined to hone its capabilities as a small software and computing enterprise, and not take the lengthy, onerous route towards becoming a vehicle OEM.

Partnering with an established OEM was, hence, always a part of its roadmap. To that end, it canvassed many such companies from 2019 to 2020, finding King Long (a subsidiary of Xiamen King Long Motor Group Co., Ltd. in Fujian, China) to be the most well-positioned and amenable to its technical integration and demonstration plans.

The bus goes moo

The MooBus-7 (so-named for its length), now in its second generation, is based upon the Skywell NJL6700 BEV small electric bus, and has recently incorporated new features on driving performance, battery capacity, and hardware ruggedness across different environments and traffic conditions.

The NJL6700 – and thus, the MooBus-7 – is a 7 m long, single-deck, low-floor bus, with a maximum passenger capacity defined by 16 fixed seats, standing space for 14 more persons and additional space for one wheelchair. Its fully electric powertrain enables a 60 kph top speed and 180 km of travelling distance between charging stints.

“By and large, we’ve adapted our autonomous driving software stack and architecture to work with various commercial off-the-shelf compute and sensor hardware,” Dr Chan explains. “This is critical as these technologies advance so rapidly, with new generations from NVIDIA and the like being released and re-released every couple of years; if we’re going to remain scalable, our software needs to be deployable on a wide range of different systems. So, it’s our software that we’ve really put our hard work and optimisation efforts into.

“In our experience, the process of selecting a bus OEM is more challenging than it is for AV software companies to choose a passenger car OEM, owing to a few key reasons. For instance, cars are mass-produced in tens or hundreds of thousands, making them readily available so you can integrate into many units at once. But buses, traditionally, tend to be manufactured more along the lines of a just-in-time production approach. So, there can be limits to our ability to order and receive vehicle units, as and when we want them.”

MooVita’s choice of partners was further narrowed by its required accessibility of the low-level CAN on its chosen vehicle in order to integrate its software stacks for autonomous driving and control. Naturally, that bus would also preferably be built with drive-by-wire components installed, or else MooVita’s integration would need to include additional components such as actuators and microcontrollers to control the vehicle.

“On top of providing a broad portfolio of electrified passenger transport vehicles, our vehicle OEMs [Skywell, and Xiamen King Long in the previous generation MooBus-7] were also among China’s earliest players in autonomous mobility, having started their R&D into the field since the 2010s,” Dr Chan recounts.

“Our partnerships are greatly enhanced by their direct experience, which guided the development of their fully digitalised, electrified and drive-by-wire-ready bus models. Having a partner with such a deep understanding of the requirements for an autonomous mobility platform, along with the necessary manufacturing and engineering capabilities, makes our work considerably easier.”

The bus is factory-built with a driver’s cabin at the front – something that MooVita is happy to keep in place owing to ongoing regulatory requirements for the presence of a safety driver in much of the world. Notably, however, Skywell manufactures the bus in both left- and right-hand drive versions, enabling both it and MooVita to deliver vehicles to any country regardless of which side of the road they drive on.

“Skywell is also practiced in adapting to differences in ancillaries between markets; for instance, EVs here in Southeast Asia use CCS Type-2 charging ports, whereas in China they have their own GB/T or Guobiao standard,” Dr Chan says.

“So, it’s easy for them to integrate or enable modifications to that level when we need them, covering not just electrical systems but also electronics like sensors and computers.”

The sensor architecture used in the MooBus for perception, navigation and obstacle avoidance is built on a combination of 3D Lidars, all-weather radars and HD cameras. Additionally, while MooBus’ localisation utilises a conventional RTK-GNSS solution aided by inertial sensing, it is further enhanced and made safe through a feature-based localisation system, the latter functioning via the bus’ Lidars and cameras for both mapping and positioning.

“The cameras are distributed around the vehicle, both around its perimeter and at differing elevations, including at the top and mid-height at the sides; that gives it 360° perception and also clear top-down view of any ground-level objects – including passengers or pedestrians – to cover the most typical blind spots near the doors and bumpers,” Dr Chan explains.

“Meanwhile, Lidars are positioned about the vehicle’s top and the radars are at ground level. There are four radars overall, with three at the front and one in the back to ensure redundancy and correct scanning for oncoming traffic or obstacles ahead of the vehicle.”

Five Lidars are installed in total, with two mounted frontally and three mounted rearwards. The HD cameras are distributed with a concentration at the front, with 14 cameras overall around the bus.

Installed within the cabin (for easy access during maintenance or upgrading work) are a variety of CPUs and GPUs that process and fuse the raw sensor data to understand the situations around the vehicle, execute the algorithms that analyse these situations for actionable information, decide upon logical responses, and then generate driving command outputs that are assertive (while still compliant to traffic rules and functional safety regulations), comfortable and efficient as needed for punctual bus services.

Sensor sourcing

MooVita’s solution is hardware-agnostic. For this model, MooVita takes its Gigabit Multimedia Serial Link (GMSL) cameras from Neousys, primarily for ruggedness and performance under various lighting conditions, and chooses Continental radars for being among the most established (and thus trustworthy, in their view) brands of automotive radar.

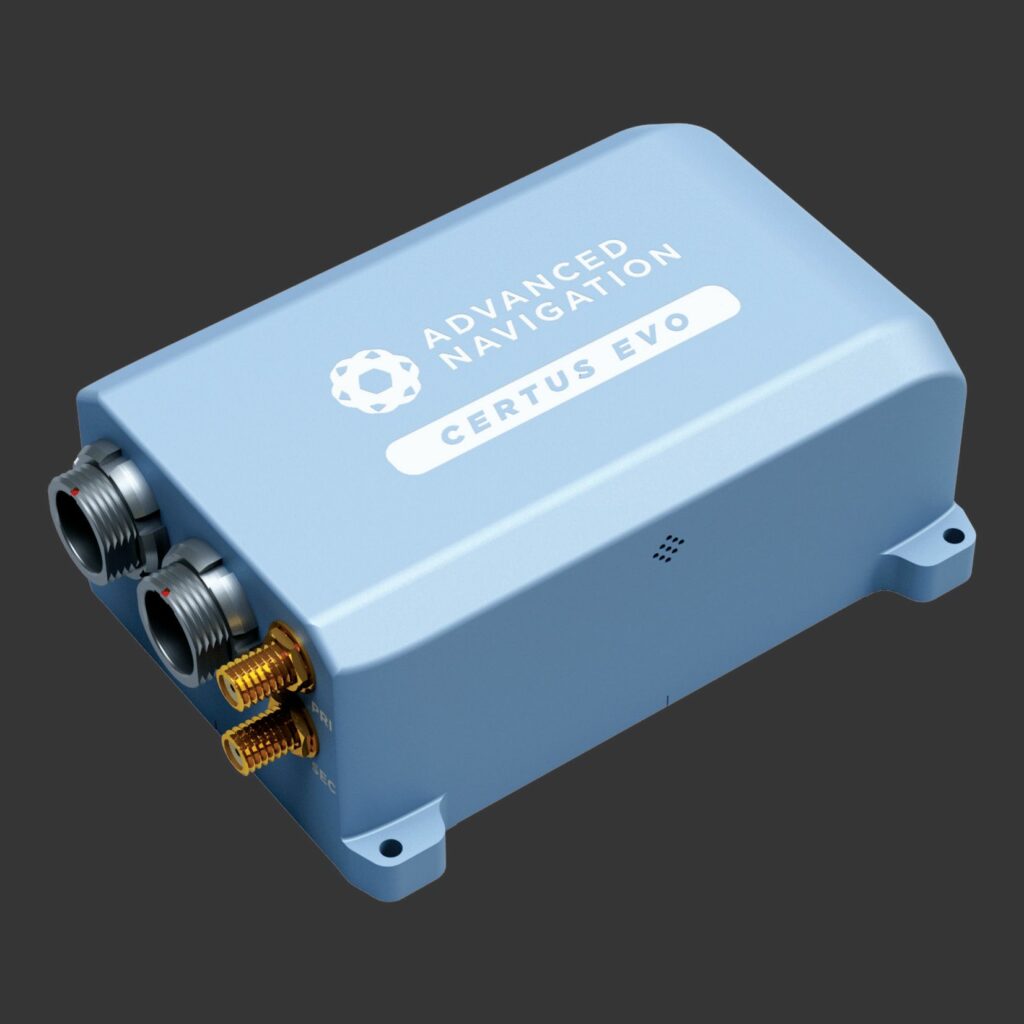

(Image: Advanced Navigation)

It took more direct trials and analysis for the Singaporean company to decide upon Hesai and Ouster for sourcing the MooBus’ Lidars. One vital capability it prized in both manufacturers’ solutions was their capacity to detect black objects, something that other Lidars had exhibited inconsistency over during development testing rounds, owing to how black objects inherently absorb more visible light (and thus reflect lasers more poorly) than non-black objects.

An inability to compensate for the low reflectivity of cars or electrical junction boxes with matte black paint finishes, or of buildings with black marble tiling or construction at ground level (to name a few examples) rendered certain Lidars as potential safety hazards in MooVita’s findings.

“Over our trials, we maintained communication with Hesai and Ouster, and learned about new capabilities that they were adding to their systems over time; not only was the pace of their advancement impressive, but the black object detection was one update that especially piqued our interest, as we heard about it around the same time that we were experiencing that exact issue with other brands,” Dr Chan recounts.

Layers of localisation

For its principal navigation needs, MooVita prefers the Certus Evo RTK-GNSS/INS, supplied by Advanced Navigation, based in Australia.

The Singaporean company has experimented with multiple INS providers but determined that Advanced Navigation’s system stood out for its reliability and consistent performance. A key advantage noted was the minimal integration and optimisation time required because the system typically achieved nominal operation immediately upon activation.

“Certus Evo’s demonstrated error tolerance is one of the best we’ve come across, and that still comes in an affordably priced package,” Dr Chan says. “Moreover, their after-sales support is frankly brilliant. I remember when the first MooBus prototype was launched and our team was still testing it, we were able to work with Advanced Navigation’s engineers to great benefit.

“We’d share our driving data and findings with them, and they’d quickly respond to our reports on the root causes of issues we were encountering, along with recommended changes to our software or hardware configurations to fix them. They’d even send us update patches to fix some of the problems, within very short turnarounds.”

The aforementioned feature-based localisation works initially via frontal Lidars and cameras taking in data that pertain, respectively, to the shapes and colours of roadside furniture and similar landmark features ahead of the MooBus. From there, MooVita’s algorithms extract the distance and attitude of such features relative to the vehicle, and so triangulate the position of the bus in three-dimensional space relative to the feature.
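As a rough illustration of the geometry involved, the sketch below recovers a vehicle position from range-and-bearing sightings of landmarks whose map coordinates are already known. It is a simplified 2D example, not MooVita’s algorithm: the function name, the assumption of a known heading and the plain averaging of estimates are all hypothetical choices made for clarity.

```python
import math

def locate_from_landmarks(observations, heading_rad):
    """Estimate the vehicle's 2D map position from landmark sightings.

    observations: list of (landmark_x, landmark_y, range_m, bearing_rad), with
    the bearing measured counter-clockwise from the vehicle's forward axis.
    heading_rad: vehicle heading in the map frame (assumed known, e.g. from an INS).
    """
    xs, ys = [], []
    for lx, ly, rng, bearing in observations:
        # Direction from the vehicle to the landmark, expressed in the map frame.
        angle = heading_rad + bearing
        # Step back from the landmark along that direction to find the vehicle.
        xs.append(lx - rng * math.cos(angle))
        ys.append(ly - rng * math.sin(angle))
    # Average the per-landmark estimates (a stand-in for a proper estimator).
    return sum(xs) / len(xs), sum(ys) / len(ys)

# Two landmarks seen from a vehicle at (10, 5) facing along the map's x-axis.
sightings = [(20.0, 5.0, 10.0, 0.0), (10.0, 15.0, 10.0, math.pi / 2)]
x, y = locate_from_landmarks(sightings, heading_rad=0.0)
```

A production system would weight each sighting by its measurement uncertainty and solve for heading as well, rather than averaging with a fixed heading.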

“That, combined with in-vehicle odometry, is used initially to assess the accuracy of the RTK-GNSS readings, especially when factors like multipathing or urban canyons might impede good GNSS signals. If the GNSS readings become inaccurate, the odometry and feature-based localisation will effectively bound the error, allowing the vehicle to accurately estimate its true position.

“A significant benefit of collaborating directly with a vehicle manufacturer is that we acquire the necessary odometry readings straight from the bus. Had we constructed the bus ourselves or utilised a more basic platform, we would have had to select and source our own encoders and handle all the subsequent data conversions. By using a finished, proven vehicle, we avoid immense engineering time and effort in numerous small ways.”
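The cross-check described above can be pictured as a simple gate: the GNSS fix is trusted only while it stays close to an independent reference built from odometry and feature-based localisation. The following is a minimal sketch under that assumption; the function name and the 1 m threshold are illustrative and do not come from MooVita.

```python
import math

def select_position(gnss_xy, reference_xy, max_deviation_m=1.0):
    """Return (position, gnss_trusted).

    gnss_xy: position reported by the RTK-GNSS/INS, in map coordinates (metres).
    reference_xy: position from odometry plus feature-based localisation.
    max_deviation_m: hypothetical threshold beyond which GNSS is distrusted.
    """
    deviation = math.dist(gnss_xy, reference_xy)
    if deviation <= max_deviation_m:
        return gnss_xy, True
    # GNSS has drifted (e.g. multipath in an urban canyon): fall back to the
    # reference so that the position error stays bounded.
    return reference_xy, False

position, trusted = select_position((100.3, 50.4), (100.0, 50.0))
```

In practice the two sources would more likely be blended in a filter than switched outright, but the gate shows how one estimate can bound the error of the other.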

Importantly, MooVita avoids depending on perception-based localisation alone, or using it as a primary input, noting that urban environments are subject to change, be it via roadworks, demolitions or changes to tree rows and similar vegetation features (be they planned or caused by sudden weather effects).

“Even something simple, like trees or hedges growing by some centimetres, can induce false readings in perception-based localisation algorithms,” Dr Chan says. “So, it’s not suitable to just use one form of position sensing, even if it’s something very advanced. It’s imperative to use multiple overlapping, complementary sensors and multiple simultaneous means of localising.”

Two-stage sensor fusion

As indicated, data from MooBus’ sensors are used not only for localisation but also for perception, being vital to defining how the vehicle behaves and avoids causing collisions or annoyances throughout traffic scenarios, as is conventional in AVs – and additionally for application-specific scenarios, such as negotiating bus stops when they may be congested with people and other vehicles.

While the feature-based localisation depends on fusions of Lidar and camera data, MooVita adopts a two-stage, multisensor fusion approach to facilitate its behavioural perception processes, which leverages Lidar, camera and radar data.

That approach begins with an initial stage combining data from two of the three sensor categories to give an initial perceptual overview of the surroundings. The third category of sensor data is layered onto that fusion in the second stage to give a ‘complete’ view of the world. This enables redundancy in two vital respects, the first being in terms of how the different sensors cover the weak spots of the others.

For those needing a reminder of the specific sensor principles at hand: radar functions persistently through inclement weather or poor visibility conditions, unlike Lidar or cameras, and provides better long-range detection capacity than the other two. Lidar, meanwhile, provides the best resolution, accuracy, depth and speed for object detection, while cameras capture no depth data (except when integrated in a stereo configuration) but can be absolutely critical for capturing context-specific colours and other superficial details of objects, such as in traffic lights, road markers or on-road vehicles and personnel working for emergency services.

The second redundancy principle is that the second stage of fusion will detect most discrepancies in attitude, range or other measurement parameters of nearby objects that may exist between different sensor groups’ readings. If all sensors are performing nominally, they will cross-validate one another’s detections of the locations and distances of all objects around the bus. If they do not (owing to faults, spoofing or other forms of cyberattack), the MooBus’ computers can determine through internal voting which readings make the most practical sense and show the strongest integrity.

This two-stage fusion process is also being executed continuously, rotating through different permutations via which sensor data are fused in the first stage and added in the second to enable redundancy checks of the sensor data through different pathways. Hence, permutations of the fused sensor data are compared with each other over time to bound and isolate errors, such that the vehicle (or technicians located in MooVita’s remote operating centres) can determine the nature of any faults or attacks occurring, and of any ideal workarounds or fixes.
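To make the rotation of permutations concrete, the sketch below reduces each sensor category to a single range reading for one object, fuses each possible pair in a first stage, and then checks the third reading against that pair in a second stage. This scalar simplification, the function names and the 0.5 m tolerance are assumptions for illustration; MooVita’s actual fusion operates on far richer data.

```python
from itertools import combinations

def two_stage_estimates(readings):
    """Try every choice of first-stage pair and check the third sensor against it.

    readings: dict of sensor name -> range to the same object, in metres.
    Returns a list of (first_stage_pair, third_sensor, pair_mean, residual).
    """
    results = []
    for pair in combinations(sorted(readings), 2):
        third = next(name for name in readings if name not in pair)
        pair_mean = sum(readings[name] for name in pair) / 2  # stage one
        residual = abs(readings[third] - pair_mean)           # stage two check
        results.append((pair, third, pair_mean, residual))
    return results

def suspect_sensor(readings, tolerance_m=0.5):
    """Return the sensor that disagrees most with the other two, or None."""
    worst = max(two_stage_estimates(readings), key=lambda r: r[3])
    return worst[1] if worst[3] > tolerance_m else None

faulty = suspect_sensor({"lidar": 20.0, "camera": 20.2, "radar": 25.0})
```

Comparing the residuals across all three pairings is what isolates the outlier: the pairing that leaves the faulty sensor until the second stage produces the largest disagreement.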

“Doing sensor fusion and integrity checks this way means MooBus’ computers are continuously handling a lot of data; fortunately, our in-house development philosophy puts a strong emphasis on the deeper parts of system engineering, particularly optimising latencies in the vehicle network,” Dr Chan explains.

“One thing that really helps there is that we have a unique way of determining how much data to sample and fuse. We don’t combine all of the data from all sensors in each fusion round because that would be too much data. Instead, we use an adaptive approach, embedding logic for identifying when to downsize certain samples but without compromising the accuracy of the resulting sensor fusion.”
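MooVita does not disclose how its adaptive sampling works, so the following is only a generic illustration of the idea of thinning data to fit a processing budget. It assumes, hypothetically, that points close to the vehicle are never dropped and that distant points are thinned by a fixed stride; the function name, the 15 m radius and the budget are all invented for the example.

```python
import math

def downsample(points, budget, keep_within_m=15.0):
    """Thin a point cloud to roughly `budget` points, keeping all near points.

    points: list of (x, y, z) in the vehicle frame, in metres.
    Near points are never dropped, so the result may exceed the budget
    if the near points alone outnumber it.
    """
    if len(points) <= budget:
        return list(points)
    near = [p for p in points if math.hypot(p[0], p[1]) <= keep_within_m]
    far = [p for p in points if math.hypot(p[0], p[1]) > keep_within_m]
    room = budget - len(near)
    if room <= 0 or not far:
        return near
    stride = -(-len(far) // room)  # ceiling division
    return near + far[::stride]

cloud = [(float(i), 0.0, 0.0) for i in range(1, 6)]       # 5 near points
cloud += [(20.0 + i, 0.0, 0.0) for i in range(100)]       # 100 far points
thinned = downsample(cloud, budget=25)
```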

Another example of MooVita’s in-depth system engineering pertains to image segmentation: the process by which a single image is partitioned into multiple, distinct and important regions to prevent errors such as several cars or pedestrians positioned behind one another being grouped as a singular car or pedestrian, respectively.

“We’ve worked quite intensively on our algorithms to try and ensure the system can always recognise separate objects as such; but when it can’t separate them, we at least make sure the incorrectly labelled group of objects can’t make the vehicle perform incorrectly or unsafely,” Dr Chan says.

“For instance, if a pedestrian stands too close to a pole, and the system – however unlikely – labels them collectively as a pole, our algorithm won’t just ignore the ‘pole’, but neither does it overwork our computing resources by double- or triple-checking whether every object has been labelled correctly or not.”

Instead, it merely tracks each labelled object for movement (a computationally less-intensive subroutine), and if one should move, the algorithms estimate its heading and velocity to identify if it merits any avoidant steering, slowing or braking on the bus’ part, just as it would with vehicles and pedestrians. Once again, the overlapping advantages of the different sensor types are key here. While cameras may lack depth perception, making object movements unclear at times, Lidars and radars (collectively) will constantly track object movements with relative ease and high fidelity.
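The movement check can be as lightweight as differencing two tracked positions. The sketch below estimates speed and heading from consecutive positions of a labelled object and ignores motion below a noise floor; the function name and the 0.2 m/s floor are illustrative assumptions rather than details from MooVita.

```python
import math

def motion_estimate(prev_xy, curr_xy, dt_s, min_speed_mps=0.2):
    """Return (speed_mps, heading_deg) for a tracked object, or None if static.

    prev_xy, curr_xy: object positions in metres at two consecutive updates.
    dt_s: time between the updates in seconds.
    min_speed_mps: hypothetical noise floor below which the object counts as static.
    """
    dx = curr_xy[0] - prev_xy[0]
    dy = curr_xy[1] - prev_xy[1]
    speed = math.hypot(dx, dy) / dt_s
    if speed < min_speed_mps:
        return None
    # Heading measured counter-clockwise from the x-axis, wrapped to 0-360 degrees.
    heading_deg = math.degrees(math.atan2(dy, dx)) % 360
    return speed, heading_deg

# A "pole" that moves 1 m sideways in half a second is clearly not a pole.
moving = motion_estimate((0.0, 0.0), (0.0, 1.0), dt_s=0.5)
```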

AI training AI

While some companies are starting to use transformer-based neural networks to train their autonomy stacks, the object detection and classification algorithms downstream of MooBus’ sensor fusion were instead built using classic (as of 2026) convolutional neural network training. MooVita did, however, use transformer-based AI in the process of developing its driving intelligence, specifically in annotating much of the masses of fused image data used to train its algorithms.

“At the beginning, we did a lot of manual annotations, bounding and classifying objects we needed the system to recognise, but as the system evolved, we integrated AI into that process. As a result, common objects like pedestrians and vehicles no longer need to be annotated by us because our annotation system inherently recognises them in new images, draws boxes around them and annotates them more than consistently enough,” Dr Chan says.

“We step in when there’s a significant delta between what the system can and can’t infer; for instance, when we’re onboarding MooBus in a region where the road signs and markers aren’t in English. In those situations, we need to drive around ourselves, collect and annotate sufficient data for an initial training round, and once we’re able to start deploying on roads in semi-autonomous, in-the-loop or even just manual driving trials, the AI algorithm will kick in and enable transformer-based learning by collecting and annotating local imagery by itself.”

The company estimates that this approach has cut data annotation hours by roughly 30–40% compared with a manual-only approach. Dr Chan notes also that “an army of engineers” had been needed for annotation before this, and so considerable labour has also been freed up for other development work, particularly quality control inspections of the data, algorithms and command outputs.

“And while we use rigorous traditional approaches to prevent our software and vehicle networks from being penetrated by hostile attackers, we’re also looking into how we might use generative AI to better detect indicators of hacking or spoofing and then also engage in protective behaviours,” he adds.

“The conventional approach of creating threat databases still brings quite slow, unreactive turnaround times between identifying and making countermeasures for threats, so we’re actively looking into advancements in how AI can be used for more reactive cybersecurity.”

Lift & coast

While programming for safety is important in any AV’s driving behaviour, buses and other large public transport vehicles must go a step further in order to achieve actual ride comfort for both standing and sitting passengers.

To manage that, MooVita has optimised its perception analytics algorithms, and hence its control outputs, around predicting how other drivers near each MooBus-7 are likely to behave. Using those predictions, the bus adjusts its accelerations and decelerations to minimise jerk (the rate of change of acceleration, which passengers feel as a sudden push forwards or backwards when the bus speeds up or brakes sharply) and thus maximise comfort for both standing and seated passengers.

“And we have significant enough experience of other AVs to know that many of them are programmed with a single braking profile,” Dr Chan comments. “If, for example, a pedestrian should step out in front of them, they will brake with the same level of force and pressure, regardless of how far ahead that pedestrian is or how fast they’re moving.”

This means that most AVs will ignore whether a gentle deceleration might be all that is needed to avoid an accident, or if the pedestrian has plentiful time and distance to notice the oncoming vehicle and withdraw back from the road in time, or if they are already prepared to cross the road in a swift and safe manner before the bus comes too close to them.

“That’s not the case for us. Our navigation algorithm analyses the distance, velocity and intentions of pedestrians and road users when calculating how best to manoeuvre or decelerate in a way that’s comfortable for passengers and duly attentive to everyone around the MooBus,” Dr Chan explains. “And the same process is performed across the whole journey and all conditions, whether we’re on flat city roads, slopes or even crossing speed bumps.”
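The contrast with a single braking profile can be shown with basic kinematics: the constant deceleration needed to stop within a distance d from speed v is v²/(2d), so a distant hazard calls for far gentler braking than a near one. The sketch below applies that formula with a safety margin and a hard limit; the function name and all numeric values are assumptions, and it ignores the intent prediction and jerk limiting that MooVita describes.

```python
def braking_command(speed_mps, gap_m, margin_m=2.0, max_decel=5.0, comfort_decel=1.5):
    """Return (decel_mps2, comfortable) for stopping short of a hazard.

    speed_mps: current bus speed.
    gap_m: distance to the hazard along the path.
    margin_m: hypothetical stand-off distance to keep from the hazard.
    max_decel: hypothetical emergency braking limit.
    comfort_decel: hypothetical limit for standing-passenger comfort.
    """
    usable = gap_m - margin_m
    if usable <= 0:
        decel = max_decel
    else:
        # Constant deceleration that brings the bus to rest within `usable` metres.
        decel = min(speed_mps ** 2 / (2 * usable), max_decel)
    return decel, decel <= comfort_decel

far_case = braking_command(speed_mps=10.0, gap_m=52.0)   # pedestrian well ahead
near_case = braking_command(speed_mps=10.0, gap_m=7.0)   # pedestrian close
```

With these example values, the far case needs only gentle braking while the near case saturates at the emergency limit, which is the distinction a single fixed profile cannot make.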

Developing that algorithm (along with those others discussed for autonomous driving, including MooVita’s two-stage sensor fusion, feature-based localisation and object classification systems) for the behaviour of drivers and pedestrians in each new region MooVita enters begins with a data collection phase.

This entails driving the MooBus-7 manually through its anticipated routes, with its full electronics suite actively gathering, fusing and analysing data as a baseline, both for determining practical behaviours in response to the particulars of each environment and for ensuring that the bus starts from a place of mimicking the behaviours of a trained human bus driver.

“Then, we start with trials of the bus driving autonomously using this baseline, followed by a fine-tuning session where we modify the self-driving profile to drive more appropriately in any scenario where there’s room for improvement,” Dr Chan says.

“Continued improvement thereafter comes from machine learning, and in the long run, MooBus’ driving behaviours are a combination of rule-based and AI-trained autonomy. That means that as the bus accumulates hours, mileage and fused sensor data across different scenarios, it adapts better to manoeuvring through different combinations of objects, including knowing when to drive assertively, as bus drivers must sometimes do for efficiency’s sake, while still being fully obedient to traffic laws.”

As for safety in terms of the bus’ own systems integrity, dual redundant actuators are installed for the steer- and brake-by-wire systems, and a series of safety thresholds are programmed into the autonomy stack (largely based on local traffic legislation) to define when MooBus must reduce its autonomy down to a degraded operating mode or pull over altogether should multiple simultaneous faults be detected.
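One way to picture such thresholds is as a mapping from the set of active faults to an operating mode. The sketch below assumes, purely for illustration, that a single fault triggers the degraded mode and that two or more simultaneous faults trigger a pull-over; the real thresholds are set by MooVita against local legislation and are not given in this article.

```python
from enum import Enum

class Mode(Enum):
    NORMAL = "normal"
    DEGRADED = "degraded"
    PULL_OVER = "pull_over"

def select_mode(active_faults, pull_over_at=2):
    """Map the set of currently active faults to an operating mode.

    active_faults: collection of fault identifiers (names here are hypothetical).
    pull_over_at: assumed number of simultaneous faults that forces a pull-over.
    """
    if len(active_faults) >= pull_over_at:
        return Mode.PULL_OVER
    if active_faults:
        return Mode.DEGRADED
    return Mode.NORMAL

mode = select_mode({"front_radar_timeout", "gnss_degraded"})
```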

Computer systems

MooVita’s choice of computer systems ranges from automotive-grade embedded systems to high-performance ruggedised industrial computers. One variant uses a model from Vecow’s range of AI computing systems, which integrates an Intel CPU and NVIDIA GPU. MooVita chose it for its reliability and for its own familiarity with such systems. The computer communicates over a network largely running the automotive-standard CAN J1939 protocol, with automotive Ethernet used in places where high speed and bandwidth are needed, such as data links between the sensors and computers.

“And as with the sensors, we deploy a ‘two out of three’ approach with the computers,” Dr Chan adds. “Three Vecow AI computers are installed in a distributed network, with processing of sensor inputs and AI algorithms happening on all three simultaneously. That means the vehicle’s behaviours and latencies aren’t dependent on one computer; there are backups if one goes down, and if one generates an error, it gets outvoted by the other two.”
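A ‘two out of three’ arrangement is commonly implemented as a voter that accepts a value only when at least two channels agree. The sketch below votes on a single numeric command from three computers; the function name, the tolerance and the choice to average the agreeing pair are assumptions for illustration, not a description of MooVita’s voter.

```python
def vote_two_of_three(a, b, c, tolerance=0.05):
    """Return the agreed command from three redundant outputs, or None.

    a, b, c: the same command (e.g. a normalised brake demand) as computed
    independently by three computers.
    tolerance: hypothetical maximum difference for two outputs to count as agreeing.
    """
    # Each candidate is (spread between the pair, mean of the pair).
    candidates = [
        (abs(a - b), (a + b) / 2),
        (abs(a - c), (a + c) / 2),
        (abs(b - c), (b + c) / 2),
    ]
    spread, value = min(candidates)
    # The closest pair wins; a lone outlier is outvoted. No agreement -> None.
    return value if spread <= tolerance else None

agreed = vote_two_of_three(0.10, 0.11, 0.90)  # third computer is faulty
```

Returning None when no two outputs agree leaves the caller to decide on a fallback, such as a degraded mode or a minimum risk manoeuvre.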

To achieve its preferred distributed network architecture between the Vecow computers, MooVita utilises Data Distribution Service (DDS) as its preferred middleware, largely owing to its adoption by a variety of automotive organisations for core connectivity and safety-critical applications, although the architecture provides significant further advantages also.

As well as being developed for simplifying complex network programming, and being one of the few middleware architectures compliant with the safety standards, DDS utilises a publish–subscribe pattern for the sending and receiving of data and commands between network nodes, with packets being delivered from publishers (nodes providing data inputs) to subscribers based on the latter’s level of interest in the packet’s ‘topic’.

Such topics may range from brake temperature and battery cell health, to traffic light changes and pedestrian movements. Hence, the publish–subscribe approach of DDS enables practical prioritisation of data deliveries within the bandwidth and latency limits of automotive networks.
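For readers unfamiliar with the pattern, the toy example below shows topic-based publish–subscribe in its simplest in-process form. It is not the DDS API and omits everything that makes DDS suitable for vehicles (discovery, quality-of-service policies, network transport); the class and topic names are hypothetical.

```python
from collections import defaultdict

class TopicBus:
    """Minimal in-process publish-subscribe bus keyed by topic name."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        """Register a callback to receive every sample published on `topic`."""
        self._subscribers[topic].append(callback)

    def publish(self, topic, sample):
        """Deliver `sample` to the topic's subscribers; return how many received it."""
        callbacks = self._subscribers.get(topic, [])
        for callback in callbacks:
            callback(sample)
        return len(callbacks)

bus = TopicBus()
brake_log = []
bus.subscribe("brake_temperature", brake_log.append)

delivered = bus.publish("brake_temperature", 85.0)          # one interested node
ignored = bus.publish("pedestrian_movements", {"id": 7})    # nobody subscribed
```

Publishers never need to know who is listening, which is what lets nodes be added or removed from the network without reprogramming the rest.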

Powertrain & platform systems

The powertrain and many of the platform components are the IP and choices of Skywell; being unchanged by MooVita to create the MooBus-7 AV, details of these systems are surplus to the requirements of this feature. For those interested, however, the pack is a 127 kWh system from CATL integrating lithium iron phosphate cells, and the electric motor is a permanent magnet synchronous machine built by Skywell, capable of up to 160 kW peak power (or up to 80 kW of continuous output).

The division of responsibilities for platform systems comes down to a matter of control authorisation levels, with Skywell covering low-level details and MooVita handling the higher-level control. Skywell, hence, keeps its platform signal network open and accessible to MooVita so that the latter can layer its autonomy stack atop platform control to transfer authority for these from the manual driver to the AI computers.

“That includes having our software control the closing and opening of doors, as well as lighting and ventilation systems, as rarely as those come up or need to change during bus routes,” Dr Chan says.

Although there are discussions in some circles as to how much in-cabin monitoring should take place in autonomous transport vehicles, for the sake of real-time optimisations for passenger safety and comfort as well as tracking for potential criminal behaviour, this has not yet come up in MooVita’s R&D.

“But what we are actively investigating is how we might smartly use the in-cabin infotainment systems to help customers inside, or even pedestrians or drivers outside, communicate or interact with each MooBus,” Dr Chan says.

“With the absence of a driver onboard, there has to be some means of fielding questions or concerns the passengers might have along the route. Anticipating those and displaying appropriate route, weather, timing or news information will be necessary to give confidence to the public that the AV is not only safe but programmed to understand what they need. Only then can the general public be truly receptive to such technological adoption.”

As of writing, one large LCD screen is installed in the MooBus-7 cabin behind the driver’s seat, although MooVita plans to reconfigure the cabin layout to have multiple smaller screens instead for easier visibility from all seats and standing positions. The selection of information displayed is defined through MooVita’s own software.

In addition to cycling through standard route progress information, the display provides periodic reminders for passengers to wear their seatbelts and to hold on to handrails, which increase or decrease in frequency depending on the likelihood of impending accelerations, decelerations or jerks. MooVita is also working with partners to program location-based services, such as local incident alerts, event information and advertisements.

Remote support

While MooVita strongly adheres to a philosophy of engineering its AVs to function independently of smart supporting infrastructure, each MooBus-7 remains connected to a Remote Control Centre. That allows fleet management systems to keep track of each vehicle’s location, progress and condition in real time; it also means that teleoperation is always available as a fallback option in the absence of onboard human bus drivers.

“So, while all algorithmic processing is done onboard, we do need to maintain our Control Centre connection for operators to provide that final safety layer, and to monitor and affirm that safe navigation and other functions are being performed as needed,” Dr Chan says.

“But if that connection is broken, the AV doesn’t just stop in the middle of the road. It continues to operate its transport service, with the onboard perception, processing and other systems continuing to function and maintain safe vehicle behaviour with all necessary minimum risk manoeuvres.”

The connection is provided through a selection of industrial-grade modems, with Peplink among MooVita’s preferred providers of mobility routers capable of consistent 4G LTE/5G connectivity in urban environments, particularly for their track record of success and wide variety of security features. If vehicle-to-infrastructure (V2I) systems are available, the vehicle can connect to them via dedicated short-range communication or cellular vehicle-to-everything (C-V2X) communication.

Each Remote Control Centre is built around a server (either one on premise or in the cloud), which controls the teleoperation functions, a set of displays that facilitate human-machine interfacing with each MooBus and a console of control peripherals for simulated driving of the bus over the 4G LTE/5G connection if necessary (including steering wheel, automatic gear changing and auxiliary controls customised to be unique to each vehicle). Each controller can manage up to eight vehicles, data link bandwidth permitting.

“As for the teleoperation, Ottopia has been a vital enabler for us because they have years of experience developing such technology for various OEMs – their teleoperation platform allows us to identify exactly which scenarios the AV requires closest attention in, and accordingly to provide the controller or operator with notifications as to when they need to either be prepared to take control or provide some other input,” Dr Chan says.

“It’s not that the AV and our autonomy stack couldn’t do that themselves; it’s more that there are always edge cases when it comes to safe road autonomy, and the smart and predictive nature of Ottopia’s software serves as an extra pair of eyes that prevents those cases from causing issues.”

Across the milky way

With the MooBus-7 proven through its services in Singapore, Malaysia and China, MooVita plans to next introduce larger buses with increased passenger capacities into its portfolio, with the idea of 8.5 and 12 m MooBuses appealing particularly to the company’s VPs. That greater throughput will form the predominant theme of its scaling-up strategy, along with testing and integration of newer sensor technologies when prudent, and exploration of alternate AV applications, including middle mile logistics – with concepts for a MooTruck being actively formulated internally.

“We’ve recently opened a new office in Guangzhou, which along with our other China office in Tianjin will enable us to expand our presence throughout China, while also taking on more projects and customers in Singapore and Malaysia,” Dr Chan says.

“We’re also working to expand into other Asia–Pacific countries, including Japan, South Korea and Australia, with the Middle East to follow in our roadmap and, thereafter, we’re hoping to look into Europe’s markets as well. Of course, that means we’re hiring new personnel wherever we can and we’re also forming as many new partnerships with Tier 1 component suppliers – as well as AV end users – to keep our supply chains and production growing in step with the world market for autonomous transport.”

Key specifications

MooBus-7

Single deck bus

Fully electric

Central drive motor

Dimensions: 6990 × 2350 × 3140 mm

Wheelbase: 5.1 m

Operating speed: 60 kph

Maximum range: 180 km

Passenger capacity: 30

Some key suppliers

Platform: Skywell

Lidars: Ouster

Lidars: Hesai

Automotive radars: Continental

Industrial PCs: Vecow

CPUs: Intel

GPUs: NVIDIA

Navigation systems: Advanced Navigation

GMSL cameras: Neousys

Data links: Peplink

Teleoperation systems: Ottopia

Battery: CATL

Electric motor: Nanjing Golden Dragon Bus Co., Ltd
