Co-simulation tackles driverless accidents

(Image: Vayavya Labs)

Developers in India have combined simulation with an accident database so that autonomous system behaviour can be exercised under controlled yet realistic conditions, writes Nick Flaherty.

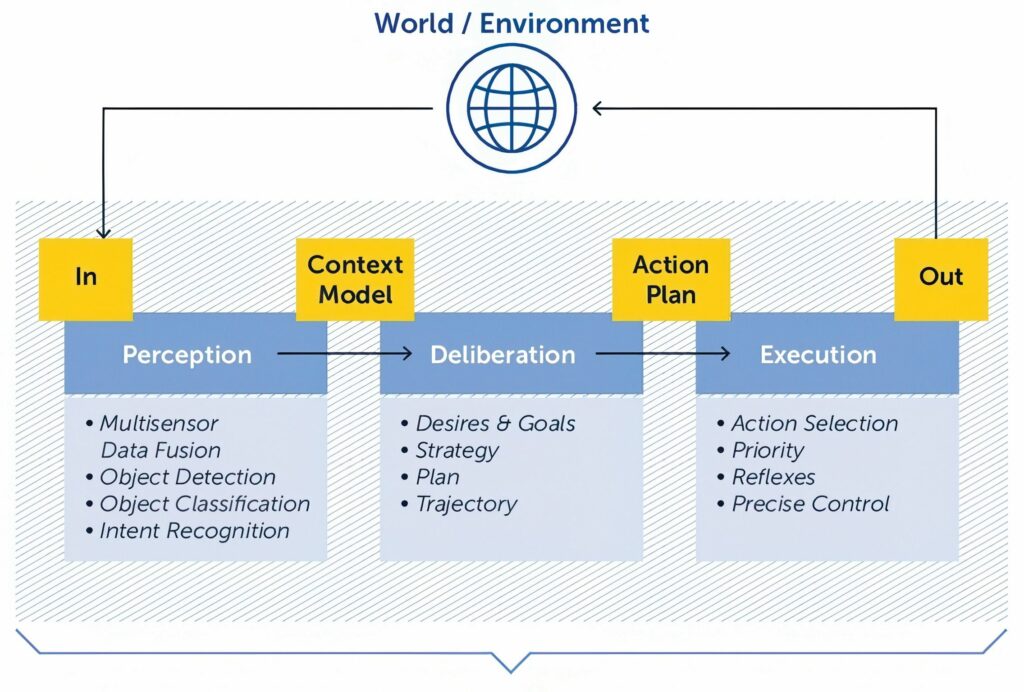

The framework developed at Vayavya Labs uses a production-grade end-to-end AI software stack with one or more physics- and sensor-aware simulation environments, alongside a scenario and orchestration layer that controls agents, environment dynamics and perturbations. Each component evolves independently but is time-aligned and behaviourally coupled. This allows failures to emerge naturally from system interaction rather than being artificially injected.
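The time-alignment idea can be illustrated with a minimal sketch. It is not Vayavya Labs' implementation: the `Component` class, the component names and the step sizes are all hypothetical, and only time alignment is shown, not behavioural coupling. Each component advances with its own internal step, and an orchestrator holds all of them to shared synchronisation points.

```python
class Component:
    """A hypothetical simulator or software stack with its own step size."""

    def __init__(self, name, step_ms):
        self.name = name
        self.step_ms = step_ms  # internal step size in milliseconds (assumed)
        self.time_ms = 0        # local simulation clock

    def advance_to(self, target_ms):
        """Take internal steps until the local clock reaches the target time."""
        while self.time_ms + self.step_ms <= target_ms:
            self.time_ms += self.step_ms


def run_lockstep(components, sync_ms, end_ms):
    """Advance every component to each sync point before moving on."""
    log = []
    for target in range(sync_ms, end_ms + 1, sync_ms):
        for component in components:
            component.advance_to(target)
        log.append((target, [c.time_ms for c in components]))
    return log


# Illustrative components only; names and rates are assumptions.
components = [
    Component("physics", 5),
    Component("sensors", 10),
    Component("autonomy_stack", 20),
]
log = run_lockstep(components, sync_ms=20, end_ms=60)
```

In this sketch the sync interval is a multiple of every step size, so all local clocks agree at each sync point; a real framework would also exchange state between components at those points.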

Combining co-simulation with accident-derived scenario intelligence produces a framework that is neither generic simulation nor overly prescriptive testing, but a practical bridge between real-world incidents and autonomy system validation, said Raviraj Inchal, lead engineer at Vayavya Labs.

Recent real-world incidents highlight that even state-of-the-art systems can fail in rare, ambiguous or poorly anticipated scenarios. These failures are not necessarily due to lack of intelligence but to gaps in scenario coverage, uncertainty handling and system-level validation.

Recent Waymo incidents demonstrate a recurring pattern in autonomous system failures, said Inchal. The system behaves correctly for extended periods and then a rare interaction or ambiguous situation emerges, causing the vehicle to enter a locally valid but globally unsafe behaviour pattern.

Such events are extremely difficult to capture through real-world testing alone owing to their rarity and unpredictability. Yet, they are precisely the scenarios that define public trust and regulatory acceptance.

Simulation allows engineers to deliberately construct and replay scenarios such as rare pedestrian behaviours, unprotected turns, sensor degradation and multi-agent interaction failures. What may take years to observe in the real world can be generated in hours.

Rather than replaying static scenarios, simulation enables parameter sweeps with slight variations in speed, timing, intent, environmental perturbations and agent behaviour uncertainty. This exposes fragile decision boundaries and non-linear failure modes.
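A toy example shows how a sweep of this kind can expose a decision boundary. The stopping-distance model, the parameter names and all the values below are assumptions chosen for illustration, not figures from Vayavya Labs.

```python
from itertools import product


def stops_in_time(speed_mps, gap_m, reaction_s, decel_mps2=6.0):
    """Toy check: can the vehicle stop before an obstacle gap_m ahead?"""
    # Distance covered during the reaction delay plus the braking distance.
    stopping_distance = speed_mps * reaction_s + speed_mps ** 2 / (2 * decel_mps2)
    return stopping_distance < gap_m


def sweep(speeds, gaps, reactions):
    """Run every parameter combination and return the ones that fail."""
    failures = []
    for speed, gap, reaction in product(speeds, gaps, reactions):
        if not stops_in_time(speed, gap, reaction):
            failures.append((speed, gap, reaction))
    return failures


# Hypothetical sweep ranges: speed (m/s), gap (m), reaction delay (s).
speeds = [8.0, 10.0, 12.0, 14.0]
gaps = [20.0, 25.0]
reactions = [0.5, 1.0]
failures = sweep(speeds, gaps, reactions)
```

With these values, the vehicle always stops in time at 10 m/s, but at 12 m/s it fails once the reaction delay reaches 1.0 s with a 20 m gap. A small change in a single parameter flips the outcome, which is the kind of fragile boundary a sweep is meant to find.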

“The objective is not to replace real-world testing, but to ensure simulation remains grounded in physical and behavioural reality,” said Inchal. “Within our autonomy safety efforts, we focus on co-simulation rather than a single monolithic simulator. This capability has evolved into a co-simulation framework that explicitly connects autonomy software, simulation environments and scenario intelligence in a coordinated loop.

“Our focus is not on advancing embodied AI algorithms themselves, but on validating their behaviour and safety through structured co-simulation and scenario intelligence. The aim is not to build yet another simulator, but to create a system-level validation layer that allows embodied AI behaviours to be exercised, observed and stress-tested under controlled yet realistic conditions.”

At the core of the framework is a structured scenario layer where scenarios are behavioural abstractions – not fixed recordings – and parameters such as timing, intent, occlusion, compliance and sensor quality are explicitly modelled. This means variants can be generated systematically to explore decision boundaries.

The accident database acts as a grounding mechanism for scenario design. Instead of replaying accidents verbatim, real-world incidents are analysed to extract causal patterns and interaction structures, then translated into parameterised scenario templates to prioritise simulation coverage based on real-world risk frequency and severity.
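One simple way to rank templates by risk is to multiply frequency by severity. The article does not say how Vayavya Labs computes its priorities, so the scoring rule, the `ScenarioTemplate` class and every number in this sketch are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioTemplate:
    """A hypothetical parameterised scenario derived from accident data."""

    name: str
    frequency: float  # assumed share of incidents matching this pattern
    severity: int     # assumed severity on a 1-5 scale

    @property
    def risk(self):
        # Assumed scoring rule: frequency weighted by severity.
        return self.frequency * self.severity


def prioritise(templates):
    """Order templates from highest to lowest risk score."""
    return sorted(templates, key=lambda t: t.risk, reverse=True)


# Made-up entries for illustration; not real accident statistics.
templates = [
    ScenarioTemplate("occluded_pedestrian", 0.10, 5),
    ScenarioTemplate("unprotected_turn", 0.25, 3),
    ScenarioTemplate("low_speed_merge", 0.40, 1),
]
ranked = prioritise(templates)
```

Here the most frequent pattern ranks last because its severity is low, while the moderately frequent, moderately severe unprotected turn ranks first. Simulation time would then be allocated in that order.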
