Gimbals

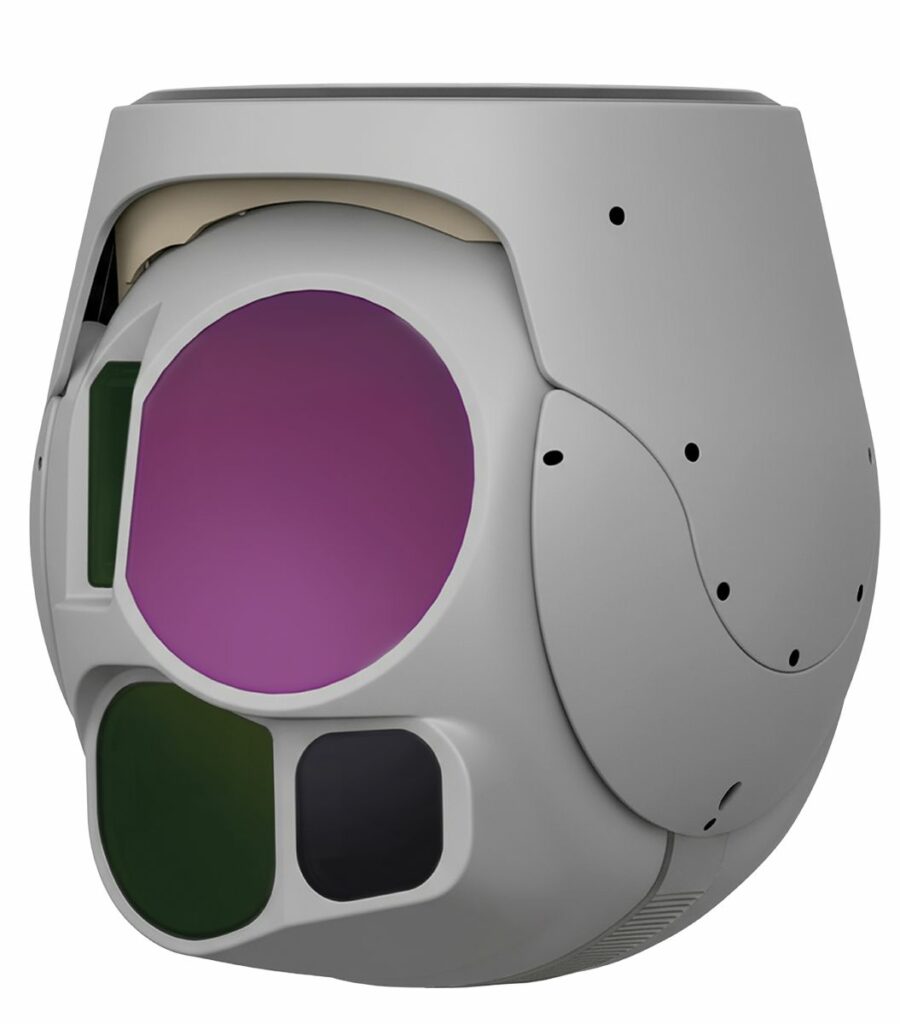

(Image: UXV Technologies)

A sharper eye

Rory Jackson unpacks how and why gimbals are becoming smarter, more open and higher-performing devices, as their SWaP signatures shrink and manufactured numbers grow

Whether you’re a customer, a systems integrator or a manufacturer, gimbals are a hard product to get right. These tightly-integrated systems are designed at the confluence of multiple different technologies, including cameras, computer processing, electromotion, thermal management, materials science, optics and AI software.

Advancements in any one of these disciplines are of great interest to gimbal customers (both the uncrewed vehicle integrators and the organisations seeking the actionable data that gimbals put out). But whether new gimbal technologies are being integrated and used optimally, or even correctly, comes down to the gimbal engineers and their best practices.

But such engineers and manufacturers are challenged by how the market for high-end gimbals traditionally operates. Gimbals are expected to be expensive and long-lasting systems; so historically, most suppliers would not produce or sell them in bulk. UAV customers thus learned to be good at making a limited number of gimbals last, swapping one unit from one aircraft to the next as the first lands for maintenance and the other is prepped for take-off. On top of that, different customers can have quite different requirements: even within militaries, for instance, a naval customer might have very different needs in terms of sensor and enclosure ruggedness in their gimbals to one used by the land army, special forces, marines or so on.

Thus, most well-known manufacturers often produce gimbals that are highly-customised, with very high-end hardware to maximise their performance and lifespans – in batches too small to allow economies of scale – with units designed to attract as many customers as possible while still satisfying (and not falling short of) each customer’s niche requirements.

However, some other companies adopt an alternative strategy of constructing gimbals in high volumes from lower-cost, more widely-available subsystem hardware, within large but scalable production facilities to enable mass production at tight margins while minimising risks of overcapacity or ROI shortfalls. Increasingly, such gimbals’ capabilities are defined not by dazzlingly high-end hardware, but by a combination of very robust, advanced software and openness in their architecture.

(Image: Hood Tech Vision)

This alternative approach means that their value can be added externally, improved procedurally and future-proofed via firmware updates, rather than being locked in via hardware. Using lower-cost components and scalable, transferable software architectures also enables a degree of freedom and economies of scale to produce more diverse ecosystems of specialised gimbals. These range from products built around heavy laser target designators for controlling guided munitions, to micro-gimbals below 200 g that retain multisensor architectures while being easily swappable in and out of UAVs of varying size and configuration.

Managing either approach requires a balancing act that considers several factors. One of these, naturally, is constant engagement with customers over their changing and intensifying requirements, whether they concern perennial areas of optimisation like size, weight, power or cost, or more specific demands like more camera zoom, better machine vision techniques, improved vibration damping, or ruggedisation for extreme temperatures or other environmental hazards.

Another factor is maintaining a sizable supply network covering lenses, sensors, circuit boards, wires, connectors, electric motors, processors, heat sinks, housing parts, bearings and more. Doing so allows rapid access to – and integration and testing of – new subsystems as they enter the market, as well as quick acquisition of known subsystems when iterating a new prototype (and then putting it into production) to satisfy a customer request.

Furthermore, the quality of a gimbal often comes down to how well everything inside fits together, and control and flexibility over certain key parts means control and flexibility with the form factors of all the other subsystems. It follows that the highest-end gimbal manufacturers will invest in or maintain some strategically vital production capabilities in-house; being able to machine and hone one’s own optics, housings or heat sinks, for instance, enables faster prototyping and catering for custom demands, as well as the kind of in-house experimentation with different gimbal design techniques that leads to a more informed and capable engineering team.

But the optimisation of gimbals’ internal designs should not be considered as wholly separate from gimbal software engineering either. A gimbal manufacturer who can, for example, shrink down a complex AI analytics algorithm – from something originally written for a vast server rack on the ground – into something suitable for a processor card tiny enough to fit inside a gimbal housing, will unlock better latencies and data quality for their customers than those who can’t or don’t bother. Hence, a huge array of skilled engineers working together as a synchronised team is indispensable when producing high-standard gimbals, whether high- or low-priced.

With all of the disciplines that go into making gimbals suitable for critical uncrewed missions being so interconnected, from mechanical and electrical design fundamentals to downstream considerations such as software optimisations or designing for manufacture, it pays gimbal manufacturers to keep diverse skill sets in-house, while also watching for specific trends among both supplier offerings and customer requests as they emerge.

Subcomponent trends

SWaP-C, as a term, covers a broad area where gimbal customers will always demand: “More, more, more,” (to a more pointed degree than with most other UAV subsystems), for obvious reasons. A smaller, lighter, lower-power payload reduces weight and drag imposed on the aircraft, meaning longer flights, more data and thus increased revenue (with a lower price per unit then meaning increased profit).

Given the aforementioned importance of getting gimbals’ internals to fit together, maintaining the exact same capabilities in a package with reduced SWaP-C typically takes at least one of three different approaches. One is integrating new advanced payload sensors, electric motors, or housing and/or circuitry materials from leading suppliers, thereby retaining prior generations’ capabilities (in mechanical and electrical terms) within a reduced SWaP-C signature.

(Image: Gremsy)

Another is keeping in-house manufacturing over subcomponents critical to the gimbal’s SWaP-C and capabilities (lenses, housings and circuit boards in particular). That is important for customising them for niche requirements, but also for keeping parts compliant with valued industrial and defence standards, such as IP ratings, EMI or EMC ratings, or Mil-Std levels. It also enables year-round component optimisations such that granular improvements in their cost, power needs, size or weight can be stacked up into sizeable advancements over time.

Lastly, adopting new generations of image and video processing cards (where technology takes a great leap forwards every 2–3 years or thereabouts) can empower software advancements that offset or substitute for the highest-end mechanical and electrical hardware capabilities.

With customer demand for gimbal stability also having shot up over the past 3–5 years, that latter approach is increasingly leveraged for features such as digital zoom, image stabilisation and frame synchronisation, which can prove vital augmentations to existing, established practices (along with proprietary techniques guarded by a few leading manufacturers) for gimbal balancing and closed-loop motor control. Smart power management and thermal management via software-defined electrics and electronics are also proving highly desirable in the latest gimbal designs.

More specifically, gimbal companies are also experiencing significantly increased demand for laser designators. A long-established technology, their resurgence is attributable primarily to the growing spread of contested, GNSS-denied environments, in which the laser enables continued, reliable targeting and guidance amid the absence of satellite signals.

Small, well-stabilised laser targeting capabilities are hence vital among new gimbals in 2026. Key to standout solutions is the honing of both their stability within SWaP-optimised packages and their reliability. The latter quality means much the same as having a reliable camera for ISR: if the camera periodically deactivates, flickers out, or loses its focus or angle, the mission at hand could be deemed a failure, and so it is with lasers also.

And while smaller, lighter and cheaper laser systems are thus important for continued SWaP-optimisation of new gimbals, that pursuit is aided by (and running in tandem with) new SWaP advancements in IR camera cores. Both types of payload systems today are far smaller than they were 10–20 years ago, thanks to a combination of physics advancements enabling the same capabilities in a smaller package, and manufacturing advancements ensuring fine, detailed production and assembly of the arrays, cores and housings vital to such components.

Open access

A few particular advancements in gimbals have been key to ensuring their satisfactory operation among end users, once installed and running on their uncrewed vehicles.

One of these is the increased openness in architecture mentioned previously, through which gimbals can be interfaced with directly, much as with any computer asset. This can mean offering an interface into the gimbal’s application layer such that additional software can be installed and run on it as needed, and also providing a comprehensive API through which the gimbal’s systems can be controlled, meaning that the end user is not locked into using the manufacturer’s software.

Some manufacturers will go further still in their open-access strategy, with at least one opting to provide multiple levels of video APIs. Those can allow end users and integrators to work directly with corresponding levels of video depending on their expertise or mission application, from entirely unprocessed and raw video data to preprocessed video (such as that with minor, preliminary adjustments like moderate compression or corrections for colour and noise), or processed video right before it gets streamed out to operators on the ground or viewers elsewhere in the world.

Providing these with a gimbal means, for instance, that integrators with access to skilled GPU developers may institute highly unique, smart and actionable forms of analytics into the raw video data, potentially saving on transmission latencies, processor SWaP-C footprint, or other little hindrances to vehicle and mission optimisation. At the opposite end of the spectrum, integrators seeking faster (and more tried-and-tested) development tools and methods might just use known algorithms from, say, the OpenCV or YOLO libraries at a later interception (and reinsertion) point along the gimbal’s or vehicle’s video pipeline.
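As a rough illustration of the multi-level idea, the sketch below models a video pipeline with three tap points (raw, preprocessed and processed) at which an integrator's function can intercept a frame and reinsert its result. Every name here (`VideoPipeline`, `register_tap`, the level labels) is hypothetical and does not correspond to any manufacturer's API; frames are plain lists of pixel values and the two internal stages are placeholders, purely to keep the example self-contained.

```python
from typing import Callable, Dict, List

Frame = List[int]                  # stand-in for real image data
Hook = Callable[[Frame], Frame]    # integrator code: takes a frame, returns a frame

LEVELS = ("raw", "preprocessed", "processed")


class VideoPipeline:
    """Hypothetical three-stage pipeline with one tap point per video level."""

    def __init__(self) -> None:
        self._taps: Dict[str, List[Hook]] = {level: [] for level in LEVELS}

    def register_tap(self, level: str, hook: Hook) -> None:
        """Attach integrator code at one of the three levels."""
        if level not in self._taps:
            raise ValueError(f"unknown level: {level}")
        self._taps[level].append(hook)

    def _run_taps(self, level: str, frame: Frame) -> Frame:
        # Each hook intercepts the frame and reinserts its own result.
        for hook in self._taps[level]:
            frame = hook(frame)
        return frame

    def process(self, sensor_frame: Frame) -> Frame:
        frame = self._run_taps("raw", list(sensor_frame))
        # Placeholder preprocessing: clamp values to the 8-bit range.
        frame = [min(max(p, 0), 255) for p in frame]
        frame = self._run_taps("preprocessed", frame)
        # Placeholder processing: keep every other pixel, standing in for compression.
        frame = frame[::2]
        return self._run_taps("processed", frame)
```

An integrator with GPU expertise might register a hook at the `"raw"` level, while one relying on off-the-shelf detection libraries might register at `"processed"` instead; the pipeline itself does not need to change in either case.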

Such an approach enables open gimbals capable of functioning as a platform, for that growing array of companies producing highly effective AI software, but sorely needing an easier, more plug-and-play pathway to show uncrewed vehicle companies just how critically valuable their technology can be across all sorts of markets.

Continuous rotation

Another key advancement in high demand is continuous rotation functionality (specifically, 360° or further in the horizontal axis), which is being empowered through close selection and combination of slip rings, direct-drive BLDC motors and high-quality encoders to ensure seamless rotational motion without wires twisting, shearing or snapping from induced stresses.
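One practical consequence for control software can be sketched briefly. With no cable wrap to unwind, a pan controller is free to take the shorter way round to a new bearing, whereas a gimbal with hard stops may have to travel most of a full turn. The function names below are illustrative only, and the hard stops at 0° and 360° are an assumption made for the comparison.

```python
def shortest_pan_step(current_deg: float, target_deg: float) -> float:
    """Signed pan move in degrees, in [-180, 180), for a continuously rotating axis."""
    return (target_deg - current_deg + 180.0) % 360.0 - 180.0


def limited_pan_step(current_deg: float, target_deg: float) -> float:
    """Signed pan move for an axis with assumed hard stops at 0 and 360 degrees.

    The axis cannot cross the stop, so it must go back the way it came.
    """
    return target_deg - current_deg


# Moving from a bearing of 350 degrees to 10 degrees:
continuous = shortest_pan_step(350.0, 10.0)   # 20.0 (a short move across north)
limited = limited_pan_step(350.0, 10.0)       # -340.0 (almost a full turn back)
```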

A few key technical advancements can be found across each of these areas. Slip ring technology, for instance, is being boosted through extensive miniaturisation, the rise of wireless slip rings that overcome the friction and wear disadvantages of traditional brushed slip rings, and integrations with fibre optic cables for higher data speeds and bandwidths (along with very small sensors to monitor temperature, speed and general health of slip rings over time).

Additionally, although electric motors rarely see lasting transformative advancements, some new technologies for small BLDC motors are unlocking hefty weight and volume reductions, while also realising new, extremely precise and energy-efficient heights in gimbal controllability, through the production of slotless and highly copper-dense stator designs that offer great torque density, speed and dynamic efficiency, even for extreme gimbal applications.

(Image: UXV Technologies)

And some gimbal manufacturers are integrating customised encoders, leveraging inductive encoders, for instance, which give noiseless position data outputs at up to 16-bit resolution, rather than magnetic encoders that are limited to 12–13-bit resolution and are often subject to interference. On top of being useful for accurate monitoring and control during gimbal rotations (and, hence, the kinds of gimbal pointing accuracies vital for sensitive laser targeting and guidance missions), customising encoders can also be a handy pathway toward reduced gimbal diameters or increased available internal space, as newer, higher-cost encoders become available in smaller sizes.
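To put those bit depths in perspective, the short calculation below converts encoder resolution into the smallest angular step it can report (360° divided by 2 to the power of the bit count), and then into a cross-range offset at a given distance. It considers ideal quantisation only, ignoring noise, interpolation and mechanical error, and the 1 km range is an arbitrary figure chosen for illustration.

```python
import math


def angular_resolution_deg(bits: int) -> float:
    """Smallest angular step, in degrees, of an ideal encoder with the given bit depth."""
    return 360.0 / (2 ** bits)


def cross_range_offset_m(bits: int, range_m: float) -> float:
    """Lateral offset at range_m corresponding to one encoder step."""
    return range_m * math.tan(math.radians(angular_resolution_deg(bits)))


for bits in (12, 13, 16):
    step = angular_resolution_deg(bits)
    offset = cross_range_offset_m(bits, 1000.0)
    print(f"{bits}-bit: {step:.4f} deg per step, {offset:.2f} m at 1 km")
```

Under these assumptions, one step of a 12-bit encoder corresponds to roughly 1.5 m at 1 km, while one step of a 16-bit encoder corresponds to roughly 0.1 m, a sixteenfold difference.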

Video processing advancements

ISTAR missions in particular can benefit from gimbals that maintain target lock while the uncrewed platform arcs around the target, or vice versa. Arguably more important still (for certain commercial users on top of the gamut of military customers) is the capacity for full-frame synchronisation between all of the cameras and sensors pertaining to each gimbal.

While achieving that takes some smart programming work along with exhaustive hardware-matching (and potentially lengthy conversations and interface standardisations between suppliers of accelerometers, gyros and cameras), it can mean getting the IMU’s accelerometer and gyroscope data outputs synchronised with each individual frame output by the cameras for very advanced image processing and targeting information. For instance, if such tight synchronisation were combined across a networked fleet or swarm of uncrewed vehicles in the air and at ground or sea level, it could mean real-time generation of strategic-level 3D models depicting complex targets, assets or battlefields with extreme fidelity.
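The software side of that synchronisation can be sketched in simplified form. The example below assumes that IMU samples and camera frames already carry timestamps from a common clock (which in practice is where much of the hardware-matching effort goes), and linearly interpolates a single-axis gyro rate to the instant of each frame. The data layout and function name are hypothetical, and real systems may use hardware triggering rather than interpolation.

```python
from bisect import bisect_left
from typing import Sequence, Tuple

ImuSample = Tuple[float, float]  # (timestamp in seconds, gyro rate in deg/s), one axis


def imu_at(frame_time: float, samples: Sequence[ImuSample]) -> float:
    """Gyro rate linearly interpolated to a camera frame's timestamp.

    Assumes samples is non-empty, sorted by time and on the same clock as the frame.
    """
    times = [t for t, _ in samples]
    if not times[0] <= frame_time <= times[-1]:
        raise ValueError("frame timestamp outside the IMU record")
    i = bisect_left(times, frame_time)
    if times[i] == frame_time:
        return samples[i][1]          # a sample exists at exactly this instant
    (t0, v0), (t1, v1) = samples[i - 1], samples[i]
    return v0 + (v1 - v0) * (frame_time - t0) / (t1 - t0)


# IMU sampled every 10 ms; a frame exposed at t = 5 ms falls between two samples.
imu_log = [(0.000, 0.0), (0.010, 1.0), (0.020, 3.0)]
rate_at_frame = imu_at(0.005, imu_log)   # halfway between 0.0 and 1.0
```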

At a more tactical or operational level, targeting becomes much more accurate and advanced, including the ability to persistently and accurately track the relative poses of both the gimbal and the target, and to output a dense optical flow that may empower newer targeting methods as a result.

Many of these advancements depend also on the new releases in image processing systems mentioned previously – including the carrier boards, the arrangements of chips mounted on their surfaces, and the immensely sophisticated algorithms being written into them (the latter often being the biggest differentiator between two companies’ UAVs and their capabilities). Gimbal manufacturers widely agree that onboard processor solutions have advanced more than any other component over the last few years, with processor cards getting smaller and lighter as their processing speeds, data bandwidths, power efficiency and more are improved simultaneously.

Such advancements have a growing share of the impact on new gimbal architectures; while IMUs and cameras rarely advance any of their performance specifications by more than 10% with new iterations, each new generation of video processor tends to be 2–3X faster than its predecessor.
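The gap widens quickly when those figures are compounded. The snippet below is simple arithmetic on the numbers quoted above, under the assumption (not stated in the source) that the per-generation gains stay constant and that sensors and processors iterate at a comparable pace.

```python
def compounded_gain(per_generation: float, generations: int) -> float:
    """Overall improvement factor after a number of generations at a fixed gain."""
    return per_generation ** generations


generations = 3
sensor_best_case = compounded_gain(1.10, generations)   # at most 10% per iteration
processor_low = compounded_gain(2.0, generations)       # 2X per generation
processor_high = compounded_gain(3.0, generations)      # 3X per generation

print(f"Sensors after {generations} iterations: up to {sensor_best_case:.2f}x")
print(f"Processors after {generations} generations: {processor_low:.0f}x to {processor_high:.0f}x")
```

On those assumptions, three iterations leave a camera or IMU about a third better at most, while the processor beside it is 8 to 27 times faster.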

That allows for more peripheral capabilities to integrate inside each gimbal, both for the functionalities that such processors bring, and for the greater leftover space in each gimbal for additional sensors that the processing algorithms can make use of. It also saves the end user significant infrastructural burden – such as maintaining their own server racks for post-processing on the ground, or even putting companion computers for running analytics elsewhere on the uncrewed platform – because data can increasingly be made actionable (and thus worthy of customer enthusiasm) at the edge, inside the very gimbal itself.

In addition to these improved opportunities for leveraging camera data, standards for camera outputs have shifted notably in recent years. Where one once often saw analogue interfaces or older serial types such as Camera Link, most customers now expect compatibility with MIPI CSI-2 (Mobile Industry Processor Interface Camera Serial Interface 2), which is the same standard typically found in mobile phones and other high-end consumer devices.

(Image: Merio UAV Payload Systems)

MIPI CSI-2’s advanced properties (such as high speed and bandwidth, and low latency) make it a vital first requirement in enabling functional access to raw, unprocessed video data, and subsequent delivery of such data into the GPU for edge processing; the standard has thus been as important as video processing, if not more so, in terms of empowering gimbals to serve as platforms for processing video and image data, on top of just gathering it. High-quality gimbal manufacturers are therefore paying close attention and ensuring they have the right software and hardware interfaces in place to accept MIPI CSI-2 as it grows in popularity for high-speed video applications.

Testing

Naturally, the addition of video processors and other high-power computing systems inside gimbal housings will incur more heat buildup and more costly losses if chips or boards are damaged from vibrational or environmental hazards. In heavy industrial and military applications, the attrition rate of gimbals can be painfully high, not only from the missions flown but also from the repeated g-forces of catapult launches and parachute-, hook- or net-based landings.

Rigorous and comprehensive testing loops, along with detailed, high-integrity simulations of new gimbal architectures, are therefore critical for ensuring correct thermal management (both active and passive), vibration damping, environmental ruggedisation and other important factors behind gimbal performance and longevity.

On top of established rate tables and environmental chambers commonly used for testing to military standards on shock, vibration, moisture ingress and so forth, some companies will design their own hardware- or software-based regimens for shaking gimbals at extremely high g-forces to profile and improve them with extreme launches and recoveries in mind.

Additionally, when testing for extreme climates, an apt manufacturer or laboratory will pay close attention to sensitive parts of the gimbal, such as the lenses and their alignments, given how thermal contractions and expansions might cause costly mechanical seizures among such moving sections.

And inevitably, some will hit a wall regarding how far certain testing-induced failures can be mitigated through preventative reengineering – but reaching such zeniths also shows gimbal manufacturers how unavoidable failures can be addressed through repairs or remanufacturing. Hence, those gimbal makers who engage in the most testing will also be the best-informed and -equipped when it comes to performing customers’ MRO duties, with short turnaround times, clear feedback and overall high quality of service (all being critical in the age where vehicle manufacturers and operators want an engaged ‘partnership’, and not just a hands-off, distant ‘supplier’).

In-house production

While no striking inventions have been made in gimbal manufacturing over the past several years, more producers of gimbals are engaged in vertical integration strategies, not only to enable quicker customisation, validation and manufacturing of new subsystems (like lenses, housings and circuit boards, as mentioned previously), but also to bring part prices down.

(Image: Gremsy)

Achieving this, through investments in machine tools, injection moulding equipment, autoclaves, lens grinding and polishing machinery and more, also means that gimbal designs need not be locked-in with suppliers’ cheapest, most mass-produced parts to reduce costs (because asking for a custom design always incurs a higher price).

In most cases, the diameter of the gimbal head is directly proportional to its weight, so being able to flexibly create a narrower head (by controlling one’s own housings, or even making new mounts to fit externally supplied parts like motors) is a vital pathway toward shaving 2–3 mm or 20–30 g off the finished gimbal.

A few dedicated manufacturers will go as far as blank-sheet production of not just their own lenses but also their own lens control systems for their gimbals’ EO cameras. This can yield new heights in speed, accuracy and space efficiency when done correctly. Such advancements are rarer on the IR side – although IR cameras and their germanium glasses rarely come with optical zoom in gimbal systems; hence, this is of no major concern (particularly compared with EO camera lenses, which are often the largest, heaviest moving part in any gimbal).

Quality control

While no quantum leaps in gimbal production have been seen recently, nor should be expected in the years ahead, a few key practices are vital to consistent, quality unit manufacturing.

Some of these are perennial across manufacturing of any electronic system bound for aerospace, maritime or ground vehicular use. For instance, keeping close and detailed documentation of every part, stage and process of assembly is vital for ensuring every member of the manufacturing team understands what is being done and why at all times. Such documentation may sound simple or boring, until one recalls that some gimbals consist of thousands of components, at which point complex production trees become a must-have for ensuring the correct personnel always execute the correct branches, using the correct workstations, tools, subassemblies and so on.

Unique to gimbals, however, is the difficulty of performing end-of-line quality control (QC). While one can easily plug a computer into each finished gimbal and ascertain whether everything seems fine from both electrical and electronic perspectives, the fact remains that each gimbal is a tightly-sealed ball (or other shape), and not easily opened or X-rayed to verify that every part is correctly and securely mounted in its place to guarantee long life and resistance to shocks and vibration.

On top of that, if something is found to underperform after plugging into the gimbal, that tightly-sealed and -integrated nature often means the unit must be subjected to a complete teardown and rebuild because a cascade of other faults or performance shortfalls can be induced in those parts connected to the offending subsystem.

Hence, QC checks and validation should be performed at every stage of assembly, with some gimbal producers even evaluating each externally supplied input component before it enters its storage rack – and at most other subsequent assembly steps – simply because that is safer and smarter than buttoning-up the gimbal only to risk an end-of-line teardown.

Mid-assembly evaluations of parts may range from simple visual inspections with applications of standard callipers, to in-depth laser meter tests to gauge subcomponent positions and clearances down to very fine fidelities. QC for more costly components like, for instance, cameras may involve in-depth analyses of their focal plane arrays or other core operative parts, before clearing the cameras for integration into gimbals.

At the more extreme (but quality-dedicated) end, some manufacturers go beyond purchasing QC testing equipment, instead developing their own unique testing tools for checking whether optics are designed, polished and functioning correctly, or engaging in the highest industry standards and practices for traceability, such that any kind of fault ever found by any customer around the world can be tracked back to the process or part at its source.

Defined by software

While one can easily make the general prediction that future generations of gimbals will continue improving in terms of SWaP-C and performance to varying degrees (depending on longer-term requirements specific to applications, such as reliability, longevity or attritability), a few more specific expectations can be voiced regarding how the industry and its products are likely to evolve as humanity approaches and enters the 2030s.

(Image: Hood Tech Vision)

For one, as end users of gimbals get wiser and more experienced with how gimbals perform over the short and long term – manufacturers and end users of uncrewed systems both included – a growing awareness of how hard it is to judge gimbals from their product brochures becomes inevitable. Thus, judicious use or provision of digital twinning for simulating each gimbal’s performance in a realistic environment, or full-on provision of gimbals’ test units, or demonstrations of test flights with them, may well become standard expectations of the packages that gimbal manufacturers offer.

Additionally, now that vehicle manufacturers’ and operators’ eyes have been broadly opened to the edge processing power and intelligent capabilities offered by new image and video processing technologies, both fields of customers have a whole new area in which to demand: “More, more, more.”

Hence, customers across the autonomous space will want new types of intelligent capabilities, enhanced versions of old capabilities, and likely complex, overlapping combinations or permutations of multiple capabilities that such boards and algorithms can offer, for the increasingly apt end user.

It may not be enough for gimbals of the future to merely deliver full-motion video – more often, they will be expected to function as a development bed on which users may seamlessly install and run their software products, with ease- and speed-of-use by the software engineer becoming indispensable performance indicators. Hence, the best suppliers will recognise that the gimbal itself may need to serve as just the corporeal component of the finished system, and ensure that they have the right interfaces and computer systems in place to keep them readily usable in the age of AI.

Acknowledgements

The author would like to thank Nolan Ohmart at Hood Tech Vision, Steven Friberg at UXV Technologies, and Huy Pham and Tu Do at Gremsy for their help in researching this article.
